How a Tsunami of Information Inspired the Revolt of the Public

Gurri explains how the tidal wave of information rising in the past few decades transformed the relationship between authority figures and the public.

Can there be a connection between online universities and the serial insurgencies which, in media noise and human blood, have rocked the Arab Middle East? I contend that there is. And the list of unlikely connections can easily be expanded. It includes the ever faster churning of companies in and out of the S&P 500, the death of news and the newspaper, the failure of established political parties, the imperial advance across the globe by Facebook and Google, and the near-universal spread of the mobile phone.

Should anyone care about this tangle of bizarre connections? Only if you care how you are governed: the story I am about to tell concerns above all a crisis of that monstrous messianic machine, the modern government. And only if you care about democracy: because a crisis of government in liberal democracies like the United States can’t help but implicate the system.

Already you hear voices prophesying doomsday with a certain joy.

***

I came to the subject in a roundabout way. I was interested in information. The word, admittedly, is vague, the concept elusive. Information theory finds “information” in anomaly, deviation, difference – anything that separates signal from noise. But that’s not what I cared about.

Media provided my point of reference. As an analyst of global events, my source material came from parsing the world’s newspapers and television reports. That was what I considered information. I also held the belief that information of the sort found in newspapers and television reports was identical to knowledge – so the more information, the better. This was naïve of me but, if I may say so, understandable. Back when the world and I were young, information was scarce, hence valuable. Anyone who could cast a beam of light on, say, Russia-Cuba relations, was worth his weight in gold. In this context, it made sense to crave more.

A curious thing happens to sources of information under conditions of scarcity. They become authoritative. A century ago, a scholar wishing to study the topics under public discussion in the US would find most of them in the pages of the New York Times. It wasn’t quite “All the news that’s fit to print,” but it delivered a large enough proportion of published topics that, as a practical proposition, little incentive existed to look further. Because it held a near monopoly on current information, the New York Times seemed authoritative.

Four decades ago, Walter Cronkite concluded his broadcasts of the CBS Evening News with the words, “And that’s the way it was.” Few of his viewers found it extraordinary that the clash and turmoil of billions of human lives, dwelling in thousands of cities and organized into dozens of nations, could be captured in three or four mostly visual reports lasting a total of less than 30 minutes. They had no access to what was missing – the other two networks reported the same news, only less majestically. Cronkite was voted the most trusted man in America, I suspect because he looked and sounded like the wealthy uncle to whom children in the family are forced to listen for profitable life lessons. When he wavered on the Vietnam War, shock waves rattled the marble palaces of Washington. Cronkite emanated authority.

It took time to break out of my education and training, but eventually the thought dawned on me that information wasn’t just raw material to exploit for analysis, but had a life and power of its own. Information had effects. And the first significant effect I perceived related to the sources: as the amount of information available to the public increased, the authoritativeness of any one source decreased.

The idea of an information explosion or overload goes back to the 1960s, which seems poignant in retrospect. These concerns expressed a new anxiety about the advance of progress, and placed in doubt the naïve faith, which I originally shared, that data and knowledge were identical. Even then, the problem was framed by uneasy elites: as ever more published reports escaped the control of authoritative sources, how could we tell truth from error? Or, in a more sinister vein, honest research from manipulation?

Information truly began exploding in the 1990s, initially because of television rather than the internet. Landline TV, restricted for years to one or two channels in a few developed countries, became a symbol of civilization and was dutifully propagated by governments and corporations around the world. Then came cable and the far more invasive satellite TV: CNN (founded 1980) and Al Jazeera (1996) broadcast news 24 hours a day. A resident of Cairo, who in the 1980s could only stare dully at two state-owned channels showing all Mubarak all the time, by the 2000s had access to more than 400 national and international stations. American movies, portraying the Hollywood approach to sex, poured into the homes of puritanical countries like Saudi Arabia.

Commercial applications for email were developed in the late 1980s. The first server on the World Wide Web was switched on during Christmas of 1990. The MP3 – destroyer of the music industry – arrived in 1993. Blogs appeared in 1997, and Blogger, the first free blogging software, became available in 1999. Wikipedia began its remarkable evolution in 2001. The social network Friendster was launched in 2002, with MySpace and LinkedIn following in 2003, and that thumping T. rex of social nets, Facebook, coming along in 2004. By 2003, when Apple introduced the iTunes Music Store, there were more than 3 billion pages on the web.

Early in the new millennium it became apparent to anyone with eyes to see that we had entered an informational order unprecedented in the experience of the human race.

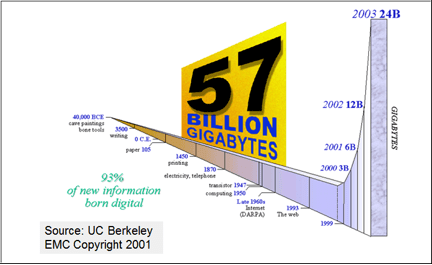

I can quantify that last statement. Several of us – analysts of events – were transfixed by the enormity of the new information landscape, and wondered whether anyone had thought to measure it. My friend and colleague, Tony Olcott, came upon (on the web, of course) a study conducted by some very clever researchers at the University of California, Berkeley. In brief, these clever people sought to measure in data bits the amount of information produced in 2001 and 2002, and compare the result with the information accumulated from earlier times.

Their findings were astonishing. More information was generated in 2001 than in all the previous existence of our species on earth. In fact, 2001 doubled the previous total. And 2002 doubled the amount present in 2001, adding around 23 “exabytes” of new information – roughly the equivalent of 140,000 Library of Congress collections.¹ Growth in information had been historically slow and additive. It was now exponential.

Poetic minds have tried to conjure a fitting metaphor for this strange transformation. Explosion conveys the violent suddenness of the change. Overload speaks to our dazed mental reaction. Then there are the trivially obvious flood and the most unattractive firehose. But a glance at the chart above should suggest to us an apt metaphor. It’s a stupendous wave: a tsunami.

***

What was the character of the changes imposed by this cataclysmic force, this tsunami, as it swept over our culture and our lives? That was the question posed to those of us with an interest in media, research, and analysis.

From a professional perspective, I realized that I couldn’t restrict my search for evidence to the familiar authoritative sources without ignoring a near-infinite number of new sources, any one of which might provide material decisive to my conclusions. However I conducted my research, whatever sources I chose, I was left in a state of uncertainty – a permanent condition for analysis under the new dispensation.

Uncertainty is an acid, corrosive to authority. Once the monopoly on information is lost, so too is our trust. Every presidential statement, every CIA assessment, every investigative report by a great newspaper, suddenly acquired an arbitrary aspect, and seemed grounded in moral predilection rather than intellectual rigor. When proof for and against approaches infinity, a cloud of suspicion about cherry-picking data will hang over every authoritative judgment.

And suspicion cut both ways. Defenders of mass media accused their vanishing audience of cherry-picking sources in order to hide in a congenial information bubble, a “daily me.”

Pretty early in the game, the wave of fresh information exposed the poverty and artificiality of established arrangements. Public discussion, for example, was limited to a very few topics of interest to the articulate elites. Politics ruled despotically over the public sphere – and not just politics but federal politics, with a peculiar fixation on the executive branch. Science, technology, religion, philosophy, the visual arts – except when they touched on some political question, these life-shaping concerns tended to be met with silence. In a similar manner, a mediocre play watched by a few thousand received reviews from critics with literary pretensions, while a computer game of breathtaking technical sophistication, played by millions, fell beneath notice.

Importance measured by public attention reflected elite tastes. As newcomers from the digital frontiers began to crowd out the elites, our sense of what is important fractured along the edges of countless niche interests.

The shock of competition from such unexpected and non-authoritative quarters left the news business in a state of terminal disorientation. I mentioned the charge of civic irresponsibility lodged against defecting customers. We will encounter this rhetorical somersault again: being driven to extinction is not just a bad thing but morally wrong, sometimes – as with the music industry’s prosecution of its customers – criminally so. Yet the news media wasn’t averse to sleeping with the enemy. Many popular blogs today are associated with newspaper websites, for example, while the New York Times’ paywall discreetly displays orifices which can be penetrated through social media.

Such liaisons raise the question of what “news” actually is. The obvious answer: news is anything sold by the news business. In the current panic to cling to some remnant of the audience, this can mean anything at all. On the front page of the gray old Times, I’m liable to encounter a chatty article about frying with propane gas. CNN lavished hours of airtime on a runaway bride. The magisterial tones of Walter Cronkite, America’s rich uncle, are lost to history, replaced by the ex-cheerleader mom style of Katie Couric.

***

No part of the news business endured a more humiliating thrashing from the tsunami than the daily newspaper, which a century before had been the original format to make a profit by selling news to the public. True confession: I grew up reading newspapers. For half my life, this seemed like a natural way to acquire information. But that was an illusion based on monopoly conditions. Newspapers were old-fashioned industrial enterprises. Publishing plants were organized like factories. “All the news that’s fit to print” really meant “All the content that fits a predetermined chunk of pages.”

In substance, the daily newspaper was an odd bundle of stuff – from government pronouncements and political reports to advice for unhappy wives, box scores, comic strips, lots of advertisements, and tomorrow’s horoscope. Newspapers made tacit claims which collapsed under the pressure of the information tsunami. They pretended to authority and certainty, for example. But the fatal flaw was the bundling, because it became clear that we had entered on a great unraveling, that the tide of the digital revolution boiled and churned against such artificial bundles of information and “disaggregated” them: that is, tore them apart.

(My 93-year-old mother has kept her subscription to the Washington Post strictly because she loves the crossword puzzles. I have shown her websites teeming with crossword puzzles, but she remains unmoved. My mother wants her bundle, and belongs to the last generation to do so.)

Information sought a less grandiose, less industrial level of circulation. The question was who or what determined that level. Every possible answer spelled misery for the daily newspaper, but the pathologies involved, I thought, reached far deeper than one particular mode of peddling information, and implicated the relationship between elites and non-elites, between authority and obedience. That passive mass audience on which so many political and economic institutions depended had itself unbundled, disaggregated, fragmented into what I call vital communities: groups of wildly disparate size gathered organically around a shared interest or theme.

These communities relied on digital platforms for self-expression. They were vital and mostly virtual. The topics they obsessed over included jihad and cute kittens, technology and economics, but the total number was limited only by the scope of the human imagination. The voice of the vital communities was a new voice: that of the amateur, of the educated non-elites, of a disaffected and unruly public. It was at this level that the vast majority of new information was now produced and circulated. The intellectual earthquake which propelled the tsunami was born here.

Communities of interest reflected the true and abiding tastes of the public. The docile mass audience, so easily persuaded by advertisers and politicians, had been a monopolist’s fantasy which disintegrated at first contact with alternatives. When digital magic transformed information consumers into producers, an established order – grand hierarchies of power and money and learning – went into crisis.

I have touched on the manner of the reaction: not worry or regret over lost influence, but moral outrage and condemnation, sometimes accompanied by calls for repression. The newly articulate public meanwhile tramped with muddy boots into the sacred precincts of the elites, overturning this or that precious heirloom. The ensuing conflict has toppled dictators and destroyed great corporations, yet it has scarcely begun.

I’d been enthralled by the astronomical growth in the volume of information, but the truly epochal change, it turned out, was the revolution in the relationship between the public and authority in almost every domain of human activity.

***

The story I want to tell is simple but has many conflicting points of view. It concerns the slow-motion collision of two modes of organizing life: one hierarchical, industrial, and top-down, the other networked, egalitarian, bottom-up. I call it a collision because there has been wreckage, and not just in a figurative sense. Nations which a little time ago responded to a single despotic will now tremble on the edge of disintegration. I describe it as slow motion because the two modes of being, old and new, have seemed unable to achieve a resolution, a victory of any sort. Both engage in negation – it is as a sterile back-and-forth of negation that the struggle has been conducted.

So I am writing this book because I fear that many structures I value from the old way, including liberal democracy, and many possibilities glimmering in the new way, such as enlarging the circle of personal freedom, may be ground to dust in that sterile back-and-forth.

To tell my story I must use my own words, but if I am to communicate successfully with you, the reader, you must understand what I mean by them. Terms like the public and authority are not simple, and require much thinking about. A goal of this book is to flesh out the reality which these terms represent – yet, for obvious reasons, I can’t just spring their meaning at the end, like the punchline of a joke. Let me, instead, offer quick and dirty characterizations to get the story started, and we can see how these hold up as we go along.

First, the public. It’s a singular noun for a plural object. I usually refer to the public as “it,” but sometimes, in a certain context, as “them.” Whether one or the other is correct, I leave for grammarians to decide. Both fit.

We’ll explore later what the public is not. My understanding of what the public is I have borrowed entirely from Walter Lippmann. Lippmann was a brilliant political analyst, editor, and commentator. He wrote during the apogee of the top-down, industrial era of information, and he despaired of the ability of ordinary people to connect with the realities of the world beyond their immediate circle of perception. Such people made decisions based on “pictures in their heads” – crude stereotypes absorbed from politicians, advertisers, and the media – yet in a democracy were expected to participate in the great decisions of government. There was, Lippmann brooded, no “intrinsic moral and intellectual virtue to majority rule.”

Lippmann’s disenchantment with democracy anticipated the mood of today’s elites. From the top, the public, and the swings of public opinion, appeared irrational and uninformed. The human material out of which the public was formed, the “private citizen,” was a political amateur, a sheep in need of a shepherd, yet because he was sovereign he was open to manipulation by political and corporate wolves. By the time he came to publish The Phantom Public in 1925, Lippmann’s subject appeared to him to be a fractured, single-issue-driven thing.

The public, as I see it [he wrote], is not a fixed body of individuals. It is merely the persons who are interested in an affair and can affect it only by supporting or opposing the actors.²

Today, the public itself has become an actor, but otherwise Lippmann described its current structure with uncanny accuracy. It is not a fixed body of individuals. It is composed of amateurs, and it has fractured into vital communities, each clustered around an “affair of interest” to the group.

This is what I mean when I use the word “public.”

Now, authority, which is a bit more like beauty: we know it when we see it. Authority pertains to the source. We believe a report, obey a command, or accept a judgment, because of the standing of the originator. At the individual level, this standing is achieved by professionalization. The person in authority is a trained professional. He’s an expert with access to hidden knowledge. He perches near the top of some specialized hierarchy, managing a bureaucracy, say, or conducting research. And, almost invariably, he got there by a torturous process of accreditation, usually entailing many years of higher education.

Persons in authority have had to jump through hoops of fire to achieve their lofty posts – and feel disinclined to pay attention to anyone who has not done the same.

Lasting authority, however, resides in institutions rather than in the persons who act and speak on their behalf. Persons come and go – even Walter Cronkite in time retired to utter trivialities – while institutions like CBS News transcend generations. They are able to hoard money and proprietary data, and to evolve an oracular language designed to awe and perplex the ordinary citizen. A crucial connection, as I said earlier, exists between institutional authority and monopoly conditions: to the degree that an institution can command its field of play, its word will tend to go unchallenged. This, rather than the obvious asymmetry in voice modulation, explains the difference between Cronkite and Katie Couric.

With this rough sketch in hand, I’m ready to name names. When I say “authority,” I mean government – office-holders, regulators, the bureaucracy, the military, the police. But I also mean corporations, financial institutions, universities, mass media, politicians, the scientific research industry, think tanks and “nongovernmental organizations,” endowed foundations and other nonprofit organizations, the visual and performing arts business. Each of these institutions speaks as an authority in some domain. Each clings to a shrinking monopoly over its field of play.

***

The new age we have entered needs a name. While the newness of the age has often been remarked upon, and by now is almost a cliché, very little effort, strangely enough, has been invested in christening it. “Digital age” is lame; “digital revolution” is better, and I will use it in some contexts, but it implies change by means of a single decisive episode, and fails to communicate the grinding struggle of negation which I believe is the central feature of our time. An earlier candidate of mine, “age of the public,” I discarded for the same reason. The old hierarchies and systems are still very much with us.

So let me return to my original point of departure: information. Information has not grown incrementally over history, but has expanded in great pulses or waves which sweep over the human landscape and leave little untouched. The invention of writing, for example, was one such wave. It led to a form of government dependent on a mandarin or priestly caste. The development of the alphabet was another: the republics of the classical world would have been unable to function without literate citizens. A third wave, the arrival of the printing press and moveable type, was probably the most disruptive of all. The Reformation, modern science, and the American and French revolutions would scarcely have been possible without printed books and pamphlets. I was born in the waning years of the next wave, that of mass media – the industrial, I-talk-you-listen mode of information I’ve already had the pleasure to describe.

It’s early days. The transformation has barely begun, and resistance by the old order will make the consequences nonlinear, uncertain. But I think I have already established that we stand, everywhere, at the first moment of what promises to be a cataclysmic expansion of information and communication technology.

Welcome, friend, to the Fifth Wave.

1. See http://www2.sims.berkeley.edu/research/projects/how-much-info-2003. Chart and study data courtesy of Hal Varian.

2. Lippmann, The Phantom Public (Transaction Publishers, Ninth Edition, 2009), p. 67.