A Libertarian Vision for the Funding of Science

We don’t need government to fund scientific research.

Until 1940, the United States inhabited a golden age of libertarian science, when under research laissez faire it became the richest, most technologically advanced, and most powerful country in the world. In 1940, however, it adopted the research dirigisme of the countries that were successively to lose World War II (France and Germany) and the Cold War (the Soviet Union).

Happily, however, since 1965, the federal government has been quietly pulling back its funding for research, and we are now about to embark on a second golden age of libertarian (or near-libertarian) science.

The First Golden Age

The first research golden age originated in the English Agricultural and Industrial Revolutions, which were the products of laissez faire in science. It culminated in the United States’ rise to global economic supremacy, which was also achieved under laissez faire in science. That first research golden age ended for Britain during World War I and for the United States during World War II.

The roots of that first research golden age were nurtured by the Anglo-American love of freedom from the state, and its prophet was Adam Smith. In his 1776 book Wealth of Nations, Smith argued that governments need not fund scientific or technological research. Industry, he said, produced all the new technology it needed:

If we go into the workplace of any manufacturer and … enquire concerning the machines, they will tell you that such or such a one was invented by a common workman. 1

Moreover, Smith continued, pure science flowed out of the advances made in industry, not in the universities; and inasmuch as industry needed pure science, it produced all it needed. 2

France and the German states, on the other hand, defaulted into absolutism, and their science was funded and controlled by the state. Absolutism found its science-funding and science-directing prophet in Francis Bacon, who in his 1605 Advancement of Learning had argued that industrial technology depended on pure science, which only governments would fund.

Bacon’s so-called linear model thus proposed:

Whereas Smith’s model proposed:

Who Was Right, Smith or Bacon?

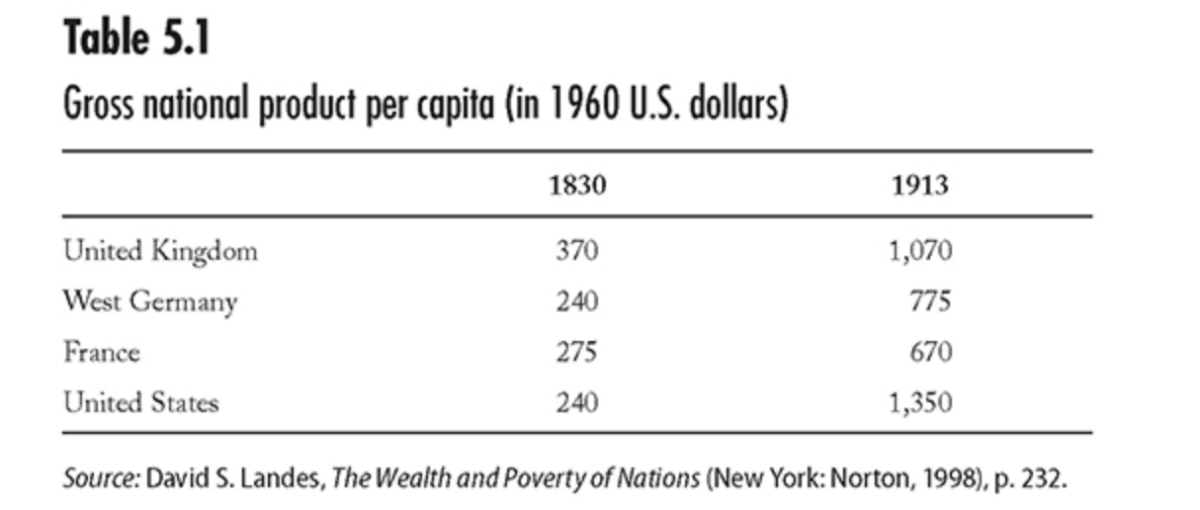

During the 18th, 19th, and early 20th centuries, the globe engaged in a vast experiment, the results of which are clear: economic growth came by research laissez faire. The globe’s two lead countries in succession were the United Kingdom (during the 19th century) and the United States (during the 20th century). So around 1890, U.S. gross domestic product (GDP) per capita overtook that of the UK, France, and the German states. However, France and the German states not only failed to overtake the UK (during the 19th century), they also failed even to converge on the UK’s GDP per capita. 3

The science policy lobby has, nonetheless, long propagated the myth that Germany overtook the UK economically during the 19th century, even though that myth has been repeatedly discredited. Table 5.1, for example, provides economic historian Paul Bairoch’s gross national product per capita data.

Bairoch’s data on the different national levels of industrialization are similar.

The economic history thus shows that research laissez faire—not dirigisme—correlates with increases in income, productivity, and wealth.

Federal Government Funding for Geopolitical Research during the Golden Age

In the decades following its founding in 1789, the federal government did subsidize some research, in that it created agencies whose missions might involve the funding of science (including the Library of Congress, 1800; the Office of the Coast Survey, 1807; the Office of the Surgeon General, 1818; the Army Medical Department, 1818; and the forerunner of the Naval Observatory, 1830). But those agencies’ research was limited to their geopolitical missions: the federal government did not fund science for its own sake; it funded only geopolitical science, and the federal government’s geopolitical ambitions were then limited.

The federal government’s first foray into science for its own sake was, unexpectedly, foisted on it by an Englishman, James Smithson, who in 1835 donated $550,000 in his will “to found at Washington under the name of the Smithsonian Institution, an Establishment for the increase and diffusion of knowledge among men.” 4

Because the money was gifted to the federal government, its acceptance was resisted by the defenders of states’ rights: thus, Senator John C. Calhoun from South Carolina said that the money “must be returned to the heirs,” while Senator William Campbell Preston, also from South Carolina, asserted that “every whippersnapper vagabond … might think it proper to have his name distinguished in the same way.” 5

Moreover, Andrew Johnson of Tennessee, who was then in the House of Representatives, was outraged when Congress voted not only to accept the donation but also to supplement it with the taxpayers’ money, which he denounced as picking the “pockets of the people.” Smithson’s money was nonetheless accepted, and the pockets of the people were duly picked. (Today, the Smithsonian receives just over half its annual income of $1.5 billion from the federal government as an appropriation from the taxpayers. 6 )

Agriculture

The largest research-associated mission the federal government adopted in peacetime before 1940 was agriculture. American agriculture had long been bedeviled by overproduction, and as economic historian Eric Jones wrote in his classic book The European Miracle:

European farming methods were preternaturally productive in the New World. Time and again European travellers complained that American farmers wasted manure. Their dung heaps rose to tower over the red Palatine barns of the colonies; why were they not spread on the land? 7

They were not spread on the land because farmers had access to too much virgin land, so they produced too much food. Consequently, spreading the manure would not have been a cost-effective activity. Moreover, the private sector—whether in the form of Eli Whitney’s cotton gin (1793), Cyrus McCormick’s mechanical reaper (1831), or Joseph Glidden’s barbed wire (1874)—continued to transform productivity. And because of the overproduction, farmers were poor, so they looked to the federal government for relief—which Representative Justin Morrill (R-VT) sought to provide.

Morrill was a follower of Henry Clay’s American System, which was in its turn based on the dirigisme Alexander Hamilton had elaborated in his “Report on Manufactures” of 1791, and he supposed that governments should overrule markets and manage economies. And in 1859, Morrill piloted a land-grant college bill through Congress, because he thought the farmers’ poverty spoke of market failure. Yet then-president James Buchanan believed in markets (how would subsidizing its education solve agriculture’s overproduction?), as well as in crowding out, so he vetoed the bill:

This bill will injuriously interfere with existing colleges … [that] have grown up under the fostering care of the states and the munificence of individuals.… What the effect will be on these institutions of creating an indefinite number of rival colleges sustained by the endowment of the federal government is not difficult to determine. 8

Only with the election of Abraham Lincoln, who, like Morrill, was a follower of Henry Clay, were the land-grant colleges established in 1862. Yet American agriculture is still bedeviled by the problem of overproduction.

War, the Episodic Federal Mission

It was also under Lincoln that, in 1863, the National Academy of Sciences (NAS) was incorporated as a military initiative to help build the ironclads and other technologies the North needed to help win its war. But once that war was settled, Congress found no more use for the NAS, which it did not dismantle but instead proceeded to ignore. And that was to be the story of the federal government’s funding of military science—until 1940: federal support would be intense during wartime, followed by its neglect upon the resumption of peace.

There was, consequently, a brief revival of government research funding for military purposes during World War I, when institutions such as the Naval Consulting Board (chaired by Thomas Edison) were created, but—again—most such institutions were soon allowed to die after 1919. That holds true in the United States but not in the UK, because Britain abandoned laissez faire in science after WWI, and its government started to fund research. In contrast, the federal government in Washington did not.

U.S. Research in 1940

By 1940, therefore, the government was funding only 23 percent of American research and development (R&D), half of which went to agriculture (still facing overproduction) and half of which went to defense (which has only about a tenth of the economic value of civil research 9 ). The federal government’s R&D was, therefore, economically marginal.

The remaining 77 percent of U.S. R&D was supported by the private sector (industry, universities’ own resources, and foundations). Yet by 1940, the United States had been the richest—and thus the most technologically advanced—country in the world for some 50 years, and it had produced the Wright brothers, Thomas Edison, and Nikola Tesla, among other extraordinary technologists. The purest of scientists, too, had flourished without the government; Albert Einstein, for example, worked at Princeton with private funds between 1933 and his death in 1955.

The market and civil society thus fully met the United States’ research needs before 1940.

The Truman Doctrine and the Federal Funding of Science for Its Own Sake

It was, as ever, for military reasons that in June 1941, the federal government created a new science body, the Office of Scientific Research and Development (OSRD), which four years later employed some 6,000 scientists on programs ranging from the Manhattan Project to antibiotics.

Yet by July 1945, with the threat of peace looming, the OSRD feared the apparently inevitable demobilization. So that month, its director, Vannevar Bush, published the book Science: The Endless Frontier to argue that OSRD’s researchers should continue to enjoy their salaries and jobs because—he claimed—the federal government’s funding of science would build economic growth in peacetime the way it had built atom bombs in wartime.

It is now generally forgotten that few people believed Bush’s economic arguments (which is why, contrary to myth, Science: The Endless Frontier dedicated most of its space to the military applications of research). In those days, people understood that the United States had grown under laissez faire in research, and people generally dismissed arguments for government funding of science as only special pleading by scientists and by corporations looking for corporate welfare. That was why upon assuming office Franklin Roosevelt cut back on government-funded research: by 1934, he’d cut the Agriculture Department’s research budget (the federal government’s largest research budget) from $21.5 million annually to $16.5 million (at the cost of 567 jobs in 1934 alone), and he more than halved the Bureau of Standards’ research budget, from $3.9 million (1931) to $1.8 million (1934). But after 1945, the United States was about to go on a permanent war footing.

In his Farewell Address, George Washington had warned against “permanent alliances” and against “excessive partiality for one foreign nation, and excessive dislike for another” (often summarized as his warning against “foreign entanglements”). And until 1947—short wars excepted—that had remained U.S. policy (cf. the United States’ refusal to join the League of Nations). Yet in 1947, the British, nearly bankrupt, suspended Pax Britannica and prepared to abandon Greece and Turkey to Soviet incursions. Whereupon President Harry Truman codified his doctrine (strengthened in 1948 and transformed into membership in the North Atlantic Treaty Organization in 1949), which might be summarized as Pax Americana: America, like Britain before it, was now permanently at war with international transgressors.

Modern war needs science, and modern war needs scientists. In 1942, 1943, and 1945, the U.S. Senate Subcommittee on War Mobilization held hearings on America’s shortage of wartime scientists (the peacetime complement had been fully adequate for peace but not for war). And that subcommittee’s chair, Harley M. Kilgore, subsequently led the congressional campaign for peacetime science. In 1948, therefore, the federal government’s long-standing but modest mission in public health was expanded into the National Institutes of Health (NIH). And in a dramatic break with the past, the National Science Foundation (NSF) was created in 1950 to fund solely academic science.

Truman did not conceive of the NSF as a producer of science but of scientists, who—having been trained—would be deployed into defense. That is why in 1947 he vetoed the first NSF bill, because it met only the aspirations of researchers (its director, for example, was to be appointed by the scientists and trustees of the NSF itself, and its grants were to be distributed by peer review). But Truman wanted a geopolitical science, and in the words of his veto:

This bill contains provisions which represent such a marked departure from sound principles for the administration of public affairs that I cannot give it my approval. It would in effect vest the determination of vital national policies, the expenditure of large public funds, and the administration of important government functions in a group of individuals who would essentially be private citizens. The proposed National Science Foundation would be divorced from control by the people to an extent that implies a distinct lack of faith in democratic processes. 10

But by 1950, with the Cold War heating up, Truman accepted a compromise NSF: the president of the United States would appoint its CEO, but the NSF would distribute research funds to the universities by peer review, whence young researchers would emerge trained for military purpose.

Deforming the Universities

Before 1940, most research in the United States was performed where it was economically relevant, namely, within industry. The universities, then, were primarily liberal arts colleges—they were essentially institutions for teaching and scholarship and for speaking truth unto power—so they had to be inducted into applying for government grants. Thus did Fred Stone of the NIH, for example, tell how during the 1950s “it wasn’t anything to travel 200,000 miles a year” to help the universities create and submit grant applications. 11 That thus imperiled the universities’ autonomy and therefore their academic freedom.

In his famous 1961 farewell speech, President Dwight D. Eisenhower—who for five years had been president of Columbia University—linked the threats the “military-industrial complex” posed to society with the threats the “scientific-technological elite” posed to the universities:

A steadily increasing share [of research] is conducted for, by, or at the direction of, the Federal government [so] the free university, historically the fountainhead of free ideas and scientific discovery, has experienced a revolution in the conduct of research. Partly because of the huge costs involved, a government contract becomes virtually a substitute for intellectual curiosity.…

The prospect of domination of the nation’s scholars by Federal employment, project allocations, and the power of money is ever present—and is gravely to be regarded.

Yet in holding scientific research and discovery in respect, as we should, we must also be alert to the equal and opposite danger that public policy could itself become captive of a scientific-technological elite. 12

But Eisenhower was to be outflanked by two key papers.

Inventing a New Economics of Science

As late as 1942, it was possible for Austrian political economist Joseph Schumpeter to argue that markets were self-sufficient in research (“industrial mutation incessantly revolutionizes the economic structure from within” 13 ), but ideas of industrial self-sufficiency did not satisfy the geopolitical ideologies of the 1940s. So new ideas had to be found. Enter RAND.

To promote his idea of an NSF, Vannevar Bush joined with the U.S. Air Force and the Douglas Aircraft Company in 1945 to help create Project RAND (Research ANd Development; now the RAND Corporation), one mission of which was to lobby for federal funding of science for geopolitical reasons. Bush, the U.S. Air Force, and Douglas were of course highly self-interested, but nonetheless RAND got its money. To secure it indefinitely, RAND invested in an economics-of-R&D project to … well, let RAND’s own historian continue the narrative:

RAND’s economics-of-R&D project also yielded two of the foundational papers in the field: Richard Nelson’s “The Simple Economics of Basic Scientific Research” and Kenneth J. Arrow’s “Economic Welfare and the Allocation of Resources for Invention.” …

Nelson’s and Arrow’s papers provided appealing economic theories as to why the nation would systematically underinvest in basic research. Their theories had clear policy implications: the U.S. government should invest more in basic research owing to “market failures” in the private sector. These theories have been largely internalized within the now dominant neoclassical economic tradition. 14

So a self-interested RAND sponsored the research that argued that science was a public good. 15

Science Installed as a Public Good

In 1954, economist Paul Samuelson published a key paper in which he started to formalize the concept of a public good as being (a) nonexcludable (i.e., a public good couldn’t be limited to one person) and (b) nonrivalrous (i.e., one person’s use of a public good need not stop someone else from using it). 16 Such a 1954 description was ready-made for Nelson and Arrow in 1959 and 1962, respectively, to argue that science was a public good, because science is (a) published and (b) one person’s use of a scientific idea (the laws of thermodynamics, say) does not preclude another person from using it.

Yet science is transparently not a public good. 17 When Edwin Mansfield and colleagues, for example, examined 48 products that had been copied within the major industries of New England during the 1970s, they reported the costs of copying were on average 65 percent of the costs of innovation. 18 The reason was the copiers had to rediscover for themselves the tacit knowledge embedded in the original innovation, a rediscovery so laborious that in some cases, Mansfield and his colleagues found that copying an innovation cost the copiers more than it had cost the original innovators. Moreover, Levin and colleagues’ survey of 650 R&D managers provided similar results for the costs of industrial copying. 19

Yet Mansfield, Levin, and their colleagues had reported only the marginal costs of the actual copying. And Rosenberg 20 and Cohen and Levinthal 21 have shown that companies seeking success in the market need first to sustain the fixed costs of a research staff whose activities are directed toward maintaining their own expertise (in pure science as well as in applied science). And since Griliches 22 and Mansfield 23 have shown that—contrary to myth—a positive correlation exists between the amount of pure science that companies publish and their profits (i.e., pure science is profitable for private industry), those costs will not be trivial.

As economist George Stigler has shown, moreover, companies also need to bear the costs of information; 24 and they also need to bear the costs of failed imitation attempts. We may therefore not know for certain what the average costs of copying in industry are, but they appear to be so high that research, in industrial practice, is excludable in the sense that it is not available to free riders.

Another Natural Experiment

Nonetheless, following the publication of the Nelson and Arrow papers, the federal government’s support for R&D surged, and by 1964, the federal government was funding 67 percent of all U.S. research: companies wanting to do research would write grant applications, as if they were charitable not-for-profit foundations needing public support. And the economic consequences were … zero. The long-term rate of U.S. GDP per capita growth did not rise.

That was an inconsequence seen globally. Thus in 2007, on reviewing the literature on R&D, Leo Sveikauskas of the U.S. Bureau of Labor Statistics concluded:

The overall rate of return to R&D is very large.… [B]ut, these high returns apply only to privately financed R&D [in industry]. 25

In 2003, using a different methodology and having studied the growth rates of the 21 leading world economies between 1971 and 1998, the Organization for Economic Cooperation and Development (OECD)—which is an intergovernmental economics research unit—had also found:

Business-performed R&D … drives the positive association between total R&D intensity and output growth.… The negative results for public R&D are surprising and deserve some qualification. Taken at face value they suggest publicly funded R&D crowds out … private R&D. 26

Even earlier, Walter Park of the American University in Washington, DC, and I had independently made the same discovery, namely, that the public funding of research crowds out its private funding. 27

The vast expansion in NIH funding also seemed to be inconsequential, and on June 15, 1966, upon launching Medicare, President Lyndon Johnson complained that the “hundreds of millions of dollars that have been spent on laboratory research” had apparently yielded no benefit to patients. “Presidents, in my judgment, need to show more interest in what the specific results of medical research are.” 28

An Open Secret

Discreetly, governments have now recognized that their funding of research is economically irrelevant. Thus in 1981, the public sector across the OECD funded, on average, 44.2 percent of R&D, whereas it now funds only 28.3 percent of R&D, 29 and the total continues to fall. A recent survey across the major research countries of the OECD has “documented a major trend across the most advanced countries: a systematic retreat of public R&D compared to R&D financed by industry.” 30 And in a classic example of crowding out, from 1981 to 2013, publicly funded R&D among the lead OECD countries fell from 0.82 percent to 0.67 percent of GDP, whereas industry-funded R&D rose from 0.96 percent to 1.44 percent of GDP. That is, a fall of 0.15 percent of GDP in publicly funded R&D has provoked a rise in industry-funded R&D of 0.48 percent of GDP.
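The arithmetic behind those last figures can be checked with a short sketch. The four shares are the ones quoted above for the lead OECD countries; the variable and function names are illustrative only, and the combined total is a derived figure, not one reported in the sources.

```python
# R&D funding as a share of GDP (percent), as quoted in the text for 1981 and 2013.
public_1981, public_2013 = 0.82, 0.67
industry_1981, industry_2013 = 0.96, 1.44


def point_change(start, end):
    """Change between two shares of GDP, in percentage points (rounded to 2 places)."""
    return round(end - start, 2)


public_change = point_change(public_1981, public_2013)        # fall in public funding
industry_change = point_change(industry_1981, industry_2013)  # rise in industry funding
total_change = round(public_change + industry_change, 2)      # net change in combined funding

print(f"Public R&D:   {public_change:+.2f} points of GDP")
print(f"Industry R&D: {industry_change:+.2f} points of GDP")
print(f"Combined:     {total_change:+.2f} points of GDP")
```

On these numbers, the rise in industry funding is a little more than three times the size of the fall in public funding, so combined R&D spending as a share of GDP rose over the period.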

I call this an “open secret,” because the facts are publicly available, but hardly anyone talks about them: the suggestion that governments need not fund research is simply not welcome, so the downsizing of the governments’ support for it has been done with minimal public debate.

U.S. Government—Again—Funding Mission Research Only—Hurrah!

Just as in 1940, the federal government is again funding only 23 percent of U.S. R&D, only for specific missions. Thus, half of the federal government’s annual outlay of $122 billion for R&D is on defense ($64 billion), with $30 billion going to health, $11 billion to space, and $11 billion to energy. And those missions of defense, health, and space and of reducing our energy dependence on fossil fuels from the Middle East clearly enjoy popular support.

The federal government’s only apparent nonmission research is the annual $5.5 billion for the NSF, but that too is mission based. Its purported mission is to fund the basic science that, in the so-called linear model, is the claimed origin of the applied science that industry needs. But the linear model is now utterly discredited among economists of science and policymakers, which is another open secret that is rarely discussed publicly. 31 In fact, the real mission of the NSF’s $5.5 billion is to buy off a powerful lobby that would otherwise clog the op-ed pages of the newspapers on both sides of the aisle with the claim that the federal government’s neglect of university science would destroy the economy. And at $5.5 billion annually, it’s a trivial cost.

However, a legitimate justification exists for the government funding of science, even though it is 100 percent and 180 degrees opposed to the conventional justification: namely, to challenge—not support—industry. We know, for example, that cigarettes cause lung cancer, not because of the assiduous research of the tobacco companies but because of Adolf Hitler. The Führer was a teetotaling, tree-hugging, eugenicist vegan (he bought into the whole progressive package circa 1933), and among his obsessions was a conviction that cigarette smoking had to be harmful. Upon assuming power, therefore, Hitler instructed his epidemiologists to find the evidence, which they did. 32 (Physician Richard Doll in Britain later claimed credit for the discovery, which had not spilled over widely from the Nazi medical literature, but Doll had been one of the few Britons to have discreetly read the original research reports.)

But even if the government funding of science is healthy because it challenges industry, it nonetheless carries its own risk, namely, crowding out philanthropic research. During the 19th century and the first half of the 20th century, philanthropists in the United States and the UK funded research with marked generosity, yet that funding fell away postwar, after governments stepped in. As Martha Peck, executive director of the Burroughs Wellcome Fund, said in 1993, “We’ve seen foundations turn away from research … the perception has been that science is getting it from other sources.” 33

The falling away of government support for R&D has since seen, however, a happy revival of philanthropic research money. And just as the Wellcome Trust (founded in 1936; current endowment £23.2 billion) was once the largest charity of any type on the globe, that palm now goes to the Bill and Melinda Gates Foundation (founded in 2000; current endowment $44.3 billion).

Conclusion

The growth and survival of the myth that science is a public good were fostered by (a) the scientists themselves, who preferred to work on their own agendas at the public’s expense rather than to industry’s direction at the shareholders’ expense; (b) industrialists, who are always on the lookout for handouts from the federal government; (c) the politicians, who see research as an inexpensive way of portraying themselves as latter-day Medicis supporting latter-day Galileos (witness Bill Clinton’s claiming in 2000, on behalf of the federal government, the credit for the first draft of the sequencing of the human genome, even though most of its cost had been borne by the Wellcome Trust, a British charity, and Craig Venter’s Celera, a for-profit company); and (d) the general public, who like the idea of science being a democratically accountable popular activity.

But the myth was always doubted by presidents (including Andrew Johnson, James Buchanan, FDR, Harry Truman, Ike, and LBJ), who privileged evidence over theory. Also discreetly skeptical have been the discreet denizens of treasuries across the OECD.

In 1994, I predicted that the welfare state’s ever-increasing pressure on public funds would cause governments, globally, to cut their budgets for R&D, which would be wholly good, because the subsequent rise of private money would more than compensate for the public sector’s retraction. 34 For my pains I received little but abuse, 35 yet the prediction has come to be more than good. 36 We are embarked on a second research golden age.

Consider space exploration, which started in Massachusetts with Robert Goddard (1882–1945). Goddard, a professor at Clark University, developed the first modern rockets (liquid fuel, 1925; gyro stabilizer, 1932; altitude reaching 9,000 feet, 1937). Those developments were funded privately by the Guggenheims and Hodgkins as not-for-profit ventures. But in view of the geopolitics, the state—in the shape of the Nazis, the Soviets, and the National Aeronautics and Space Administration (NASA)—would appropriate Goddard’s work and supplant the funding of the foundations. Today, however, thanks to entrepreneurs like Elon Musk, Jeff Bezos, and Richard Branson, space exploration is about to enter its third funding regime, namely, that of the private for-profit sector. Under those circumstances, should the taxpayer not challenge the continued funding of NASA?

Or consider molecular biology. Its most exciting current development is CRISPR-Cas9 (clustered regularly interspaced short palindromic repeats–CRISPR-associated protein 9), which is a genome editing tool that can edit DNA with almost magical precision. But its discovery owed little or nothing to the centrally planned model of Francis Bacon and everything to the entrepreneurialism Adam Smith proselytized. In the words of Eric Lander of the Massachusetts Institute of Technology:

Breakthroughs often emerge from completely unpredictable origins. The early heroes of CRISPR were not on a quest to edit the human genome—or even to study human disease. Their motivations were a mix of personal curiosity (to understand bizarre repeat sequences in salt-tolerant microbes), military exigency (to defend against biological warfare), and industrial application (to improve yogurt production). 37

Thus did CRISPR—which may prove revolutionary in the evolution of all the organisms we value (including ourselves)—emerge as something of an afterthought when yogurt manufacturers picked up on loose ends left over by biological warriors who had themselves picked up on loose ends left over by saltwater microbiologists. This process is best left to competition between the marketplace, civil society, and geopolitical defense research; it cannot be planned.

Or consider electronics, which emerged from the marketplace, not from government-directed labs. Michael Faraday discovered electromagnetic induction in 1831 at the privately funded Royal Institution; in 1883, working in his private laboratory at Menlo Park, New Jersey, Thomas Edison discovered the thermionic effect that underlay the development of diodes and triodes; William Shockley invented the semiconducting transistor circa 1950 while working at the private Bell Labs. Today, with Amazon spending $22.6 billion on research in 2017 and Alphabet $16.6 billion (and Microsoft $14.7 billion, 2018; Facebook $10.2 billion, 2018; Intel $13.1 billion, 2017; Apple $11.6 billion, 2017; Oracle $6.3 billion, 2017; Cisco $6.1 billion, 2017; Qualcomm $5.5 billion, 2017; IBM $5.4 billion, 2017), that industry’s future in the care of the private sector remains assured. 38

Adam Smith had few illusions about industry. He may have described the division of labor as the source of wealth, yet he nonetheless knew it failed us as humans:

In the progress of the division of labour, the employment of the far greater part of those who live by labour, that is, the great body of the people, comes to be confined to a few very simple operations, frequently to one or two.

In consequence, Smith wrote:

The man whose whole life is spent performing a few simple operations … generally becomes as stupid and ignorant as it is possible for a human creature to become.… His dexterity at his own particular trade seems … to be acquired at the expense of his intellectual, social, and martial virtues. But in every improved and civilized society this is the state into which the labouring poor, that is, the great body of the people, must necessarily fall, unless government takes some pains to prevent it. 39

Those pains had to include the government’s provision of education, Smith concluded, because the stupid and ignorant human creatures that industry generated could not be entrusted with the schooling of their offspring.

Equally, Smith would have endorsed the government’s funding of science to monitor potential threats from industry, such as smoking, but he would have been unsurprised that the past two centuries have confirmed his argument of 1776: research for economic growth and curiosity should be entrusted solely to the market and to the voluntary institutions of civil society.

1. Adam Smith, An Inquiry into the Nature and Causes of the Wealth of Nations, Bk. 1, Chap. 1 (London, 1776).

2. Walter Eltis, “The Contrasting Theories of Industrialization of François Quesnay and Adam Smith,” Oxford Economic Papers 40, no. 2 (June 1988): 269–88.

3. Maddison Project Database 2018, 1990 benchmark; Jutta Bolt et al., “Rebasing ‘Maddison’: New Income Comparisons and the Shape of Long-Run Economic Development,” Maddison Project Working Paper no. 10, January 2018.

4. The Smithsonian Institution, Our History, p. 1. Accessed April 2019.

5. Marlana Portolano, The Passionate Empiricist: The Eloquence of John Quincy Adams in the Service of Science (Albany: State University of New York Press, 2009), p. 109.

6. The Smithsonian Institution, “2017 Annual Report.”

7. Eric Jones, The European Miracle: Environments, Economies and Geopolitics in Europe and Asia, 3rd ed. (Cambridge, UK: Cambridge University Press, 2003), p. 218.

8. James Buchanan, “Veto Message Regarding Land-Grant Colleges,” Miller Center, University of Virginia, Charlottesville, February 24, 1859.

9. Advisory Council on Science and Technology, Developments in Biotechnology (London: HMSO, 1990).

10. Cited in United States Congress House Committee on Science and Technology Task Force on Science, “A History of Science Policy in the United States, 1940–1985,” Science Policy Study Background Report No. 1 (1986), p. 30.

11. Paula Stephan, “The Endless Frontier: Reaping What Bush Sowed,” in The Changing Frontier: Rethinking Science and Innovation Policy, ed. Adam B. Jaffe and Benjamin F. Jones (Chicago: University of Chicago Press, 2015), pp. 321–66.

12. Dwight D. Eisenhower, “Farewell Radio and Television Address to the American People,” January 17, 1961.

13. Joseph Schumpeter, Capitalism, Socialism and Democracy (1942; repr., London: Routledge, 1994), p. 83 (emphasis in original).

14. David Hounshell, “The Cold War, RAND, and the Generation of Knowledge, 1946–1962,” Historical Studies in the Physical and Biological Sciences 27, no. 2 (1997): 237–67, repr. RAND Corporation, 1998, pp. 27–58.

15. Richard R. Nelson, “The Simple Economics of Basic Scientific Research,” Journal of Political Economy 67, no. 3 (June 1959): 297–306; Kenneth J. Arrow, “Economic Welfare and the Allocation of Resources for Invention,” in The Rate and Direction of Inventive Activity: Economic and Social Factors (Princeton, NJ: Princeton University Press, 1962), pp. 609–26.

16. Paul A. Samuelson, “A Pure Theory of Public Expenditure,” Review of Economics and Statistics 36, no. 4 (November 1954): 387–89.

17. Terence Kealey and Martin Ricketts, “Modelling Science as a Contribution Good,” Research Policy 43, no. 6 (July 2014): 1014–24.

18. Edwin Mansfield, Mark Schwartz, and Samuel Wagner, “Imitation Costs and Patents: An Empirical Study,” Economic Journal 91, no. 364 (December 1981): 907–18.

19. Richard C. Levin et al., “Appropriating the Returns from Industrial Research and Development,” Brookings Papers on Economic Activity 18, no. 3 (1987): 783–820.

20. Nathan Rosenberg, “Why Do Firms Do Basic Research (with Their Own Money)?” Research Policy 19, no. 2 (April 1990): 165–74.

21. Wesley M. Cohen and Daniel A. Levinthal, “Innovation and Learning: The Two Faces of R&D,” Economic Journal 99, no. 397 (1989): 569–96.

22. Zvi Griliches, “Productivity, R&D and Basic Research at Firm Level in the 1970s,” American Economic Review 76, no. 1 (1986): 141–54.

23. Edwin Mansfield, “Basic Research and Productivity Increase in Manufacturing,” American Economic Review 70, no. 5 (1980): 863–73.

24. George J. Stigler, “The Economics of Information,” Journal of Political Economy 69, no. 3 (June 1961): 213–25.

25. Leo Sveikauskas, “R&D and Productivity Growth: A Review of the Literature,” Bureau of Labor Statistics Working Paper no. 408, September 2007, pp. 1, 32 (emphasis in original).

26. OECD, The Sources of Economic Growth in OECD Countries (Paris: OECD, 2003), pp. 84–85.

27. Walter Park, “International R&D Spillovers and OECD Economic Growth,” Economic Inquiry 33, no. 4 (October 1995): 571–90; Terence Kealey, “The Economic Laws of Research,” Science and Technology Policy 7 (1994): 21–27.

28. United States Congress House Committee on Science and Technology Task Force on Science, p. 54.

29. Daniele Archibugi and Andrea Filippetti, “The Retreat of Public Research and Its Adverse Consequences on Innovation,” Technological Forecasting and Social Change 127 (February 2018): 97–111.

30. Archibugi and Filippetti, “The Retreat of Public Research.”

31. David Edgerton, “The ‘Linear Model’ Did Not Exist: Reflections on the History and Historiography of Science and Research in Industry in the Twentieth Century,” in The Science-Industry Nexus: History, Policy, Implications, ed. Karl Grandin and Nina Wormbs (New York: Watson, 2004), pp. 1–36.

32. Robert N. Proctor, “The History of the Discovery of the Cigarette–Lung Cancer Link: Evidentiary Traditions, Corporate Denial, Global Toll,” Tobacco Control 21, no. 2 (2012): 87–91.

33. Quoted in David Dickson, “Charities Taking the Strain,” Nature 364 (1993): 742–44.

34. Kealey, “Economic Laws of Research,” 245–46.

35. Paul David, “From Market Magic to Calypso Science Policy: A Review of Terence Kealey’s Economic Laws of Scientific Research,” Research Policy 26, no. 2 (1997): 229–55.

36. Archibugi and Filippetti, “The Retreat of Public Research.”

37. Eric Lander, “The Heroes of CRISPR,” Cell 164, no. 1 (January 2016): 18–28.

38. Rani Molla, “Amazon Spent Nearly $23 Billion on R&D Last Year—More than Any Other U.S. Company,” Recode, April 9, 2018.

39. Smith, Wealth of Nations, Bk. 5, Chap. 1.